Last month I decided it was time to sunset the Seasonality apps. It’s been years since I’ve updated them, and I don’t have time to maintain the project anymore. It was a tough decision, but I decided it was time to walk away (post with more details here). Unfortunately, shortly after making that decision, I learned that NOAA was going to discontinue the OpenDAP servers that Seasonality Pro uses to download model data. This presented a pretty big problem. I didn’t want the apps to stop working just weeks after discontinuing them, but building an entirely new data source is very time-consuming. It took me weeks of full-time work to get things just right when I wrote it in the first place.

I played with the idea of hosting my own OpenDAP servers on Gaucho Software hardware. That would allow me to continue offering the data without changing the app code. The drawback is that it’d also likely use tens to hundreds of gigabytes of bandwidth every day. That might have been doable, but it wasn’t a great option long-term, so I started thinking about other options. NOAA was suggesting that OpenDAP users move to their Grib Filter API. That would save me the hosting cost, but wouldn’t be an easy task.

First I would need to figure out the Grib Filter API and find a way to translate OpenDAP model variables into Grib Filter variables, which are named differently and reference atmospheric levels in a different way.
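To give a feel for that translation, here is a minimal sketch in Python (chosen for brevity; it is not the app's Swift code). The mapping table, the `translate` and `grib_filter_url` helpers, and the example date are all hypothetical, and the query parameter pattern (`var_…=on`, `lev_…=on`, `dir=…`) reflects my understanding of the URLs the NOMADS Grib Filter page generates, so it should be checked against a real generated URL.

```python
from urllib.parse import urlencode

# Illustrative mapping only: OpenDAP-style name -> (Grib Filter variable, level).
# A level of None means the variable lives on pressure levels, so the level
# has to be supplied separately (OpenDAP used a level dimension instead).
OPENDAP_TO_GRIB = {
    "tmp2m": ("TMP", "2_m_above_ground"),
    "ugrd10m": ("UGRD", "10_m_above_ground"),
    "prmslmsl": ("PRMSL", "mean_sea_level"),
    "tmpprs": ("TMP", None),
}

def translate(opendap_name, pressure_mb=None):
    """Return the (variable, level) pair Grib Filter expects."""
    var, level = OPENDAP_TO_GRIB[opendap_name]
    if level is None:
        if pressure_mb is None:
            raise ValueError(f"{opendap_name} needs a pressure level")
        level = f"{pressure_mb}_mb"
    return var, level

def grib_filter_url(base, file_name, directory, opendap_name, pressure_mb=None):
    """Build a request URL for a single variable on a single level."""
    var, level = translate(opendap_name, pressure_mb)
    query = {
        "file": file_name,
        f"var_{var}": "on",
        f"lev_{level}": "on",
        "dir": directory,
    }
    return f"{base}?{urlencode(query)}"

url = grib_filter_url(
    "https://nomads.ncep.noaa.gov/cgi-bin/filter_gfs_0p25.pl",
    "gfs.t00z.pgrb2.0p25.f006",   # hypothetical forecast file
    "/gfs.20240101/00/atmos",     # hypothetical run directory
    "tmp2m",
)
print(url)
```

The point of the table is that one OpenDAP name can expand into two Grib Filter switches, and pressure-level variables need the level passed in rather than baked into the name.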

After the data is downloaded, it would need to be parsed. Grib is a binary data format that isn’t particularly easy to work with. I spent about 2 weeks writing a parser in Objective-C over a decade ago. It wasn’t a complete parser, though; it only supported the model variables I was interested in at the time. I wasn’t keen on using that code this time around.

Finally, I would need to adapt the data to my model drawing code and make sure the performance was still good. I estimated it would take me 6-8 weeks of evenings and weekends to get everything right—a heck of a lot of work to put into a product that I’ve just discontinued.

But what if AI could help? Could I possibly vibe code this functionality, and would the code be good enough that I’d want to include it in the project? I decided I didn’t have much to lose, so I signed up for a $20 Claude Code account to give it a shot.

First up was figuring out the API details. I wrote up a prompt with as many details as possible, linked to all the documentation I could find, and then pointed Claude at the site for the GFS model and let it loose. Claude took about 45 minutes, asking me questions along the way, but ended up with some code to download Grib data that looked pretty reasonable and was ready to start testing. I spent another 45 minutes having it add all the other weather models Seasonality supports and create a few Swift CLI apps to test downloading the data so it could be unit tested. I ran out of my first 5 hour usage limit, but I finished about a week’s worth of work in one evening. Things were looking good so far.

The second night I wanted to work on the Grib decoder, in order to see whether the data I was able to download was even correct. I pointed Claude to the Grib spec and gave it some guidance along the way. I was impressed that it read through all the Grib code tables to try and cover decoding all the different parameters stored in the file. I remember this being particularly time-consuming when I implemented my own decoder. The first draft of Claude’s code wasn’t perfect, but it was better than expected. There were a couple of crashes along the way, most of which were from assumptions made about the size of the data. I asked Claude to add range checking and other guards in order to avoid that. With those bugs fixed, I asked Claude to download samples of all the weather models and to compute average/min/max values to see if they were reasonable. Then I told it to write unit tests for everything. That took up the rest of my second 5 hour time block, but saved me the 1-2 weeks of tedious coding it would have taken to do it myself. Up until this point, the Grib decoder was the biggest question mark to me. So the fact that Claude created a reasonable implementation in about an hour was the turning point where I started to think this whole process might just work.
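As an illustration of the kind of guard and sanity check described above, here is a small Python sketch (not the app's Swift decoder) of GRIB2 "simple packing", where each packed integer X becomes (R + X · 2^E) / 10^D. The function names `unpack_simple` and `summarize` are hypothetical, and a real decoder would read R, E, D and the bit width from the file's data representation section rather than take them as arguments.

```python
def unpack_simple(payload, count, nbits, ref, bin_scale, dec_scale):
    """Decode `count` values of `nbits` bits each from a big-endian bit stream."""
    # Guard against nonsense header values before touching the payload.
    if count < 0 or not 0 <= nbits <= 32:
        raise ValueError("implausible count or bit width")
    divisor = 10.0 ** dec_scale
    if nbits == 0:
        # Zero bits per value means a constant field equal to the reference.
        return [ref / divisor] * count
    needed = (count * nbits + 7) // 8
    if len(payload) < needed:
        # The size assumption that would otherwise read past the buffer.
        raise ValueError(f"payload too short: need {needed} bytes, got {len(payload)}")
    bits = int.from_bytes(payload[:needed], "big")
    total_bits = needed * 8
    mask = (1 << nbits) - 1
    scale = 2.0 ** bin_scale
    values = []
    for k in range(count):
        shift = total_bits - (k + 1) * nbits
        packed = (bits >> shift) & mask
        values.append((ref + packed * scale) / divisor)
    return values

def summarize(values):
    """Return (min, mean, max) as a quick plausibility check."""
    if not values:
        raise ValueError("no values to summarize")
    return min(values), sum(values) / len(values), max(values)

# Four 4-bit values (1, 2, 3, 15) packed into two bytes.
temps = unpack_simple(bytes([0x12, 0x3F]), count=4, nbits=4,
                      ref=270.0, bin_scale=1, dec_scale=0)
print(temps, summarize(temps))
```

If a temperature field decoded this way came back with a mean of a few thousand kelvin, that would point at a wrong scale factor or bit width long before anything reached a map.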

The third day I spent most of the time cleaning up the implementation created during the first two days. I reviewed the code and told Claude to work on several improvements to make it more manageable. Another 5 hour limit hit, but the code from the first two days was much cleaner at the end.

Day four was focused on translation. I have my own gridded data model that I use in Seasonality, so I needed to write code to convert the Grib data to a Seasonality grid. It was this day that I learned that one of the models (the HRRR) wasn’t being decoded correctly. Claude dug into the problem and determined that Apple’s ImageIO framework wasn’t decoding the JPEG2000 format used in the Grib for that model correctly. Looking into solutions, it looked like the best option would be to bring in a third party package that was written in C, and write a wrapper around it to use from the Grib decoder. Claude finished the code more quickly than it took to research alternatives to ImageIO. Another 5 hour limit hit, but HRRR data was now working.

Day five I got to see data in Seasonality Pro for the first time. Claude worked on some code to interface with the Grib filter client that was written above, download the data, decode it, convert it to a Seasonality grid, and pipe it through to the mapping engine. When it actually worked, I was genuinely excited. We needed a cache for the downloaded grids, so I had Claude work on that too using a SwiftData architecture. After a couple of tweaks, it seemed to be downloading smoothly, but there was a performance problem in the display code somewhere. I was at my 5 hour limit, so that would need to wait until the next day.

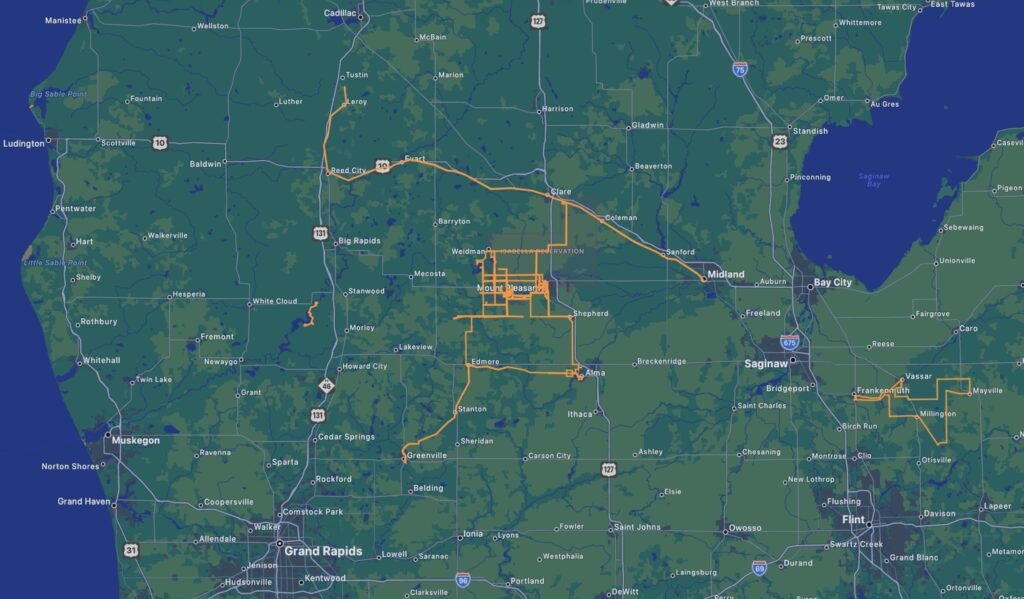

Day 6 started in Instruments, trying to figure out where the bottleneck was. I determined that it was taking a long time for the decoder to process one of the Grib data formats when run on device. I asked Claude to look into it, and a few minutes later it made changes for a 10x performance improvement. I followed up by asking Claude to optimize the other Grib data format decoders, which also got faster, though not quite by an order of magnitude. I also ran into an issue with map projections that day. OpenDAP reprojected everything to an equirectangular projection automatically on the server before returning the data, but Grib files don’t do that. I needed a way to convert the Lambert Conformal data in the Grib to equirectangular. Again, I could have spent a few days working on the geometry to accomplish this, but Claude had something working within minutes. At the end of day 6 I had maps with real data performing well in the app.
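For the curious, the geometry involved looks roughly like the Python sketch below. It is not the code in the app: it uses the standard spherical Lambert Conformal Conic forward formulas and nearest-neighbor sampling, and the `LambertGrid` type, the function names, and the Earth radius are assumptions for illustration. The idea is to walk the target lat/lon grid, project each point into the Lambert grid, and pick up the nearest source value.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_229.0  # assumed spherical Earth radius

@dataclass
class LambertGrid:
    nx: int           # points per row
    ny: int           # number of rows
    dx: float         # grid spacing in meters (x)
    dy: float         # grid spacing in meters (y)
    lat1: float       # first standard parallel, degrees
    lat2: float       # second standard parallel, degrees
    lat0: float       # reference latitude, degrees
    lon0: float       # central meridian, degrees
    first_lat: float  # latitude of grid point (0, 0)
    first_lon: float  # longitude of grid point (0, 0)

def lcc_forward(lat_deg, lon_deg, g):
    """Project lat/lon to Lambert Conformal x/y in meters (spherical formulas)."""
    if not -90.0 < lat_deg < 90.0:
        raise ValueError("latitude must be strictly between -90 and 90")
    lat, lat1, lat2, lat0 = (math.radians(v) for v in (lat_deg, g.lat1, g.lat2, g.lat0))
    # Wrap the longitude difference into [-180, 180) so 237.5 and -122.5 agree.
    dlon = math.radians((lon_deg - g.lon0 + 180.0) % 360.0 - 180.0)
    t = lambda phi: math.tan(math.pi / 4 + phi / 2)
    if abs(lat1 - lat2) < 1e-12:
        n = math.sin(lat1)  # single standard parallel
    else:
        n = math.log(math.cos(lat1) / math.cos(lat2)) / math.log(t(lat2) / t(lat1))
    f = math.cos(lat1) * t(lat1) ** n / n
    rho = EARTH_RADIUS_M * f / t(lat) ** n
    rho0 = EARTH_RADIUS_M * f / t(lat0) ** n
    return rho * math.sin(n * dlon), rho0 - rho * math.cos(n * dlon)

def latlon_to_grid_index(lat_deg, lon_deg, g):
    """Fractional (column, row) of a lat/lon point within the Lambert grid."""
    x, y = lcc_forward(lat_deg, lon_deg, g)
    x0, y0 = lcc_forward(g.first_lat, g.first_lon, g)
    return (x - x0) / g.dx, (y - y0) / g.dy

def resample_to_latlon(values, g, lats, lons):
    """Nearest-neighbor resample onto a lat/lon grid; None marks points outside."""
    out = []
    for lat in lats:
        row = []
        for lon in lons:
            i, j = latlon_to_grid_index(lat, lon, g)
            col, line = round(i), round(j)
            inside = 0 <= col < g.nx and 0 <= line < g.ny
            row.append(values[line][col] if inside else None)
        out.append(row)
    return out

# Tiny illustrative grid; real parameters would come from the Grib file itself.
grid = LambertGrid(nx=3, ny=3, dx=3000.0, dy=3000.0, lat1=38.5, lat2=38.5,
                   lat0=38.5, lon0=-97.5, first_lat=21.138123, first_lon=-122.719528)
source = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
print(resample_to_latlon(source, grid, [21.138123, 60.0], [-122.719528, 0.0]))
```

Nearest-neighbor keeps the sketch short; bilinear interpolation between the four surrounding source points would give smoother maps at the cost of a little more arithmetic per pixel.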

I’d like to say that on day 7 I rested, but the job wasn’t quite done yet. This was the day that I hit the 5 hour limit twice. Now that I had Grib data being displayed, I wanted to know which other places in the codebase were still using OpenDAP. I asked Claude what tasks remained to complete the OpenDAP to Grib Filter migration. It came up with a laundry list of places in the code that referenced OpenDAP, and ordered them by descending impact. Some of the items were easy for me to fix myself; others were more involved, so I asked Claude to handle them. Either way, I had over a dozen commits to the codebase that day and was finally ready to upload a test build to App Store Connect. I wrapped up the project on day 8: I finished the last bits of polish and submitted the app for review.

Eight days. That’s all it took to completely replace the data layer in Seasonality Pro using Claude Code. That’s probably the best $20 I’ve ever spent. Is it perfect? Not really. OpenDAP allowed me to save bandwidth on cell connections by striding the data, which Grib Filter doesn’t allow. But that’s a server limitation, not a client one. On the client side, the code is arguably better than the code it replaced. I now have a data layer that pulls using async URLSession and decoding methods instead of multiple layers of Objective-C completion handlers. It feels so much more reliable.
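To put the striding trade-off in numbers, here is a small Python sketch. OPeNDAP constraint expressions select each dimension as `[start:stride:stop]`; the variable name `tmp2m`, the helper names, and the stride of 4 are illustrative assumptions, and the 721 × 1440 grid corresponds to a global 0.25 degree model.

```python
def opendap_constraint(var, dims):
    """Build an OPeNDAP constraint like var[start:stride:stop][...]."""
    return var + "".join(f"[{start}:{stride}:{stop}]" for start, stride, stop in dims)

def strided_count(start, stride, stop):
    """Number of indices selected by an inclusive start:stride:stop range."""
    return (stop - start) // stride + 1

# One time step, every 4th latitude row and every 4th longitude column.
dims = [(0, 1, 0), (0, 4, 720), (0, 4, 1439)]
expr = opendap_constraint("tmp2m", dims)

full_points = 721 * 1440
strided_points = 1
for start, stride, stop in dims:
    strided_points *= strided_count(start, stride, stop)

print(expr)
print(full_points, strided_points, round(full_points / strided_points, 1))
```

With Grib Filter the same thinning can only happen after the download, so the full-resolution grid for the requested region still crosses the network.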

I still have some work to do to clean up some loose ends before cutting my final ties to Seasonality. But I feel like I can leave the project on much better terms now than where I was just a few weeks ago. Thanks for the help, Claude.